Artificial intelligence bill overview:

- Who: Senators Josh Hawley, R-Mo., and Richard Blumenthal, D-Conn., introduced a bill that would make social media companies liable for harmful artificial intelligence content.

- Why: As artificial intelligence content becomes more sophisticated and widespread, it poses a risk of harm to individuals and communities.

- Where: The bipartisan bill was introduced in the U.S. Senate.

A new bipartisan bill proposes to make social media companies liable for harmful content created with artificial intelligence (AI), Reuters reports.

Senators Josh Hawley, R-Mo., and Richard Blumenthal, D-Conn., introduced a bill that would waive immunity under Section 230 of the Communications Act for claims related to artificial intelligence content.

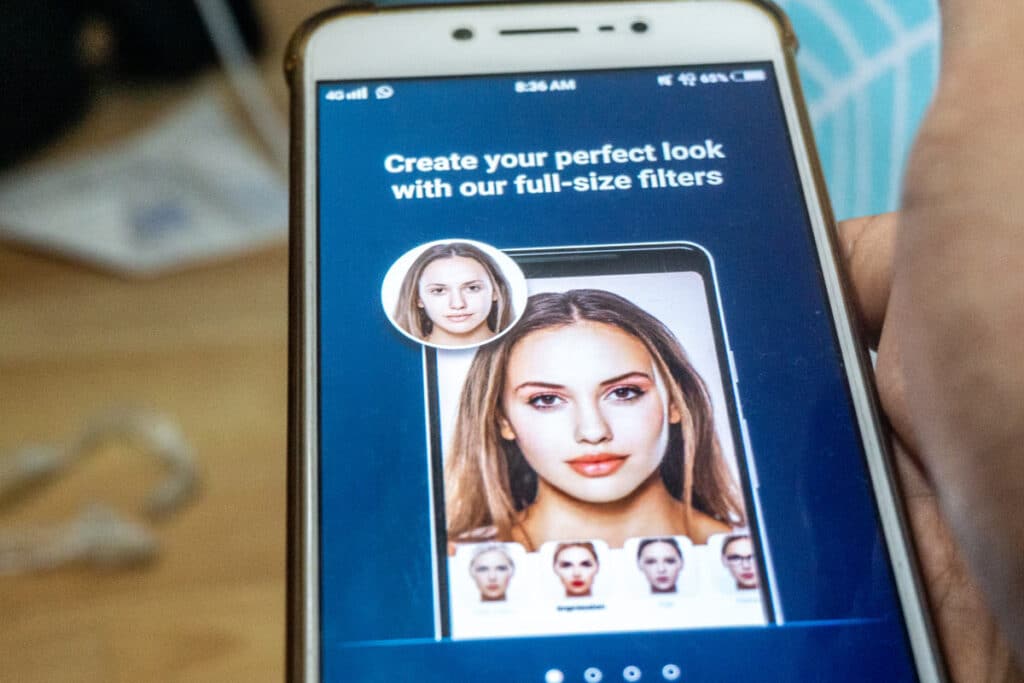

The proposed artificial intelligence bill would make social media companies liable for harmful content including realistic “deepfake” videos and photographs of real people, Reuters explains.

Section 230 provides broad protection to “interactive computer services” by barring them from being treated as the “publisher or speaker” of information posted by their users. A narrow set of exceptions applies, including child sex trafficking and copyright infringement.

The bill defines “generative artificial intelligence” as “an artificial intelligence system that is capable of generating novel text, video, images, audio, and other media based on prompts or other forms of data provided by a person.”

Attorneys general submit comment urging accountability for harmful AI content

On June 12, a group of nearly two dozen state attorneys general submitted a comment on artificial intelligence content accountability in response to a National Telecommunications and Information Administration (NTIA) request for comment on artificial intelligence policies.

“As with other emerging technologies, a critical challenge in this area is to encourage and oversee the proper development of dynamic and trustworthy tools without hampering innovation,” the attorneys general wrote.

They say “after-the-fact enforcement is a very promising approach,” noting that a strict regulatory policy may dampen innovation.

The attorneys general encourage the government to evaluate and categorize the areas artificial intelligence content could affect, such as civil rights, physical and psychological safety, human rights and equal access to goods, services and opportunities.

They also express concern about the types of information artificial intelligence systems are able to access, such as biometric data, medical information and children’s personal information. They note artificial intelligence content may pose additional risks if it is subject to minimal human oversight.

